GPU Cloud Provider · Livingston, New Jersey, USA

CoreWeave

CoreWeave is a hyperscaler focused on AI and high-performance computing. Its cloud platform is built on NVIDIA hardware, including GB200 Grace Blackwell Superchips and BlueField DPUs, and is designed for the efficiency, scalability, and performance that large-scale AI workloads demand.

GPU Marketplace

Company Profile

Infrastructure

Compute & Deployment

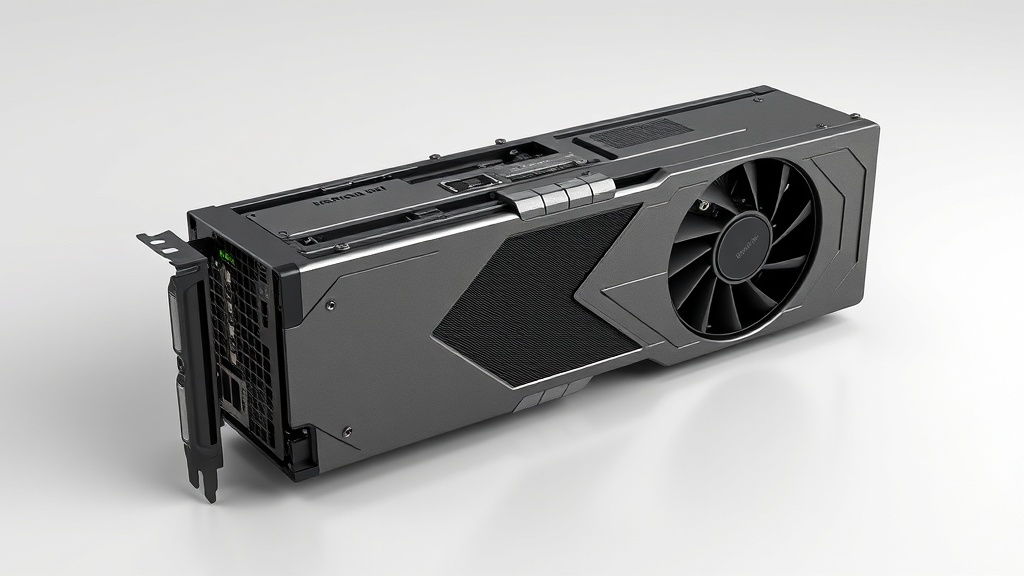

GPU Hardware

Pricing Model

Performance & Scaling

Developer Experience

Security & Compliance

Data Center Locations

Coverage

Compliance Regions

Key Strengths

Known Limitations

Additional Information

Support Options

24/7 email and phone support; dedicated technical account managers

Community

Slack community for developers; active presence on GitHub and technical forums

Green Energy

Committed to renewable energy sourcing; works with green energy providers for data center operations

Core Proposition

GPU-accelerated cloud purpose-built for AI/ML workloads, offering Kubernetes-native infrastructure and a large selection of NVIDIA GPUs at scale