GPU Cloud Provider · New Jersey, USA

Vultr

Vultr offers cloud GPU instances from data centers worldwide, targeting AI, ML, and HPC workloads. Its infrastructure includes both AMD and NVIDIA GPUs, offered as self-service products with predictable, published pricing.

GPUs

5

Founded

2014

Countries

15

Data Centers

24

Uptime SLA

99.9%

Team Size

201-1000

GPU Marketplace

NVIDIA GB300 NVL72 (On-Demand)

NVIDIA HGX B300 (On-Demand)

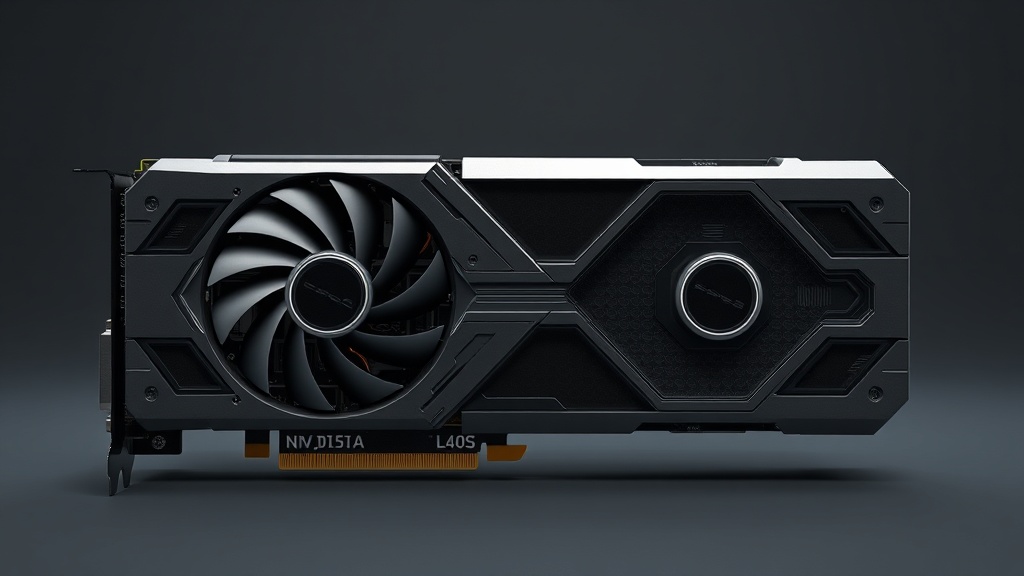

NVIDIA L40S (On-Demand)

AMD Instinct MI300X (On-Demand)

NVIDIA A100 80GB SXM (On-Demand)

Company Profile

Company Type: Scale-up

Provider Type: Cloud Provider

Founded: 2014

Headquarters: New Jersey, USA

Legal Entity: Vultr Holdings, LLC

Funding: Private

Team Size: 201-1000

Infrastructure

GPU Fleet: AMD Instinct MI300X, AMD Instinct MI325X, NVIDIA A100 80GB, NVIDIA A16

Network Fabric: Global network with CDN coverage

Connectivity: Varies by data center; typically ranges from high Gbps to Tbps

Storage: Block storage, file system storage, object storage

Data Center Tier: Tier 3

Bare Metal: Yes

Availability: GA (General Availability)

Startup · Enterprise · Developer · Research

Compute & Deployment

On-Demand: Yes

Spot / Interruptible: No

Reserved Instances: Yes (monthly and longer-term committed pricing available)

Bare Metal: Yes (bare metal GPU servers available)

VM-Based: Yes

Container-Based: Yes (Docker)

Kubernetes: Yes (managed Kubernetes via Vultr Kubernetes Engine)

Serverless GPU: No

Spin-Up Time: 2-5 minutes

Terraform: Yes (official provider on the HashiCorp registry)

GPU Hardware

Latest Generation: H100 SXM, MI300X, MI325X

Legacy Support: A100 PCIe, A16

Multi-GPU Nodes: Yes (up to 8 GPUs per node)

Max GPUs/Node: 8

NVLink: Yes (on H100 SXM nodes)

InfiniBand: Yes (HDR 200 Gbps on clustered nodes)

PCIe vs SXM: Both PCIe and SXM

HGX Platform: Yes (HGX H100 8-GPU)

Pricing Model

Per Hour: Yes (primary billing unit)

Per Minute: No

Subscription: No

Spot Discount: No spot pricing

Public Pricing: Yes

Hidden Fees: IP address charges apply; additional fees for managed databases and object storage

Egress Charges: Free up to 1 TB/month per instance; $0.01/GB thereafter

Pay-as-you-go: Yes

Credit System: Yes (prepaid credits available)
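The egress terms above reduce to simple arithmetic. The sketch below estimates a month's overage charges under two assumptions not stated in this profile: each instance's 1 TB allowance is tracked separately (not pooled across the account), and 1 TB is counted as 1,000 GB. Check Vultr's current bandwidth terms before relying on the numbers.

```python
# Sketch: estimate monthly egress overage from the terms quoted in this profile.
# Assumptions (not confirmed by the profile): the allowance applies per instance
# and is not pooled, and 1 TB is counted as 1,000 GB.
from typing import Iterable

FREE_GB_PER_INSTANCE = 1_000
OVERAGE_USD_PER_GB = 0.01


def monthly_egress_cost(egress_gb_per_instance: Iterable[float]) -> float:
    """Return the total overage in USD, applying the free allowance per instance."""
    total = 0.0
    for gb in egress_gb_per_instance:
        billable_gb = max(0.0, gb - FREE_GB_PER_INSTANCE)  # usage beyond the allowance
        total += billable_gb * OVERAGE_USD_PER_GB
    return round(total, 2)


# One instance stays within its allowance; the other exceeds it by 2,500 GB.
print(monthly_egress_cost([800, 3_500]))  # 25.0
```

Pooling, the TB definition, and billing granularity all change the result, which is why they are kept as named constants rather than buried in the formula.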

Performance & Scaling

Multi-Node Training: Limited (manual setup; no managed orchestration disclosed)

Elastic Scaling: Manual only

Auto Scaling: No

InfiniBand: No (Ethernet only; specific speeds not disclosed)

NVSwitch: No

SLA: 99.9%

Performance Isolation: Partial (virtual GPU instances, not bare metal)

Noisy Neighbor: Partial (virtual isolation on multi-tenant infrastructure)
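An uptime percentage is easier to compare as a downtime budget. This sketch converts an SLA percentage into the minutes of downtime it permits, computing both figures quoted in this profile (99.9% and 99.99%). The 30-day window is an assumption; actual SLA terms define their own measurement period and credit rules.

```python
# Sketch: convert an uptime SLA percentage into a downtime allowance.
# Assumption: a 30-day measurement window; real SLA terms may define it differently.


def allowed_downtime_minutes(sla_percent: float, days: float = 30.0) -> float:
    """Return the minutes of downtime permitted by `sla_percent` over `days` days."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - sla_percent / 100)


for sla in (99.9, 99.99):
    print(f"{sla}% over 30 days: {allowed_downtime_minutes(sla):.2f} min")
# 99.9% over 30 days: 43.20 min
# 99.99% over 30 days: 4.32 min
```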

Developer Experience

Onboarding: Deploy in under 5 minutes via web UI or API; no approval process required

Frameworks: CUDA, cuDNN, ROCm

SDK Languages: Python, Go, Node.js, PHP, Ruby, Java

CLI Tooling: Full CLI (vultr-cli) for instance management, deployment, and automation

Jupyter: Via SSH port forwarding, or a user-configured Jupyter server on the deployed instance

Templates: PyTorch, TensorFlow, CUDA Toolkit

Documentation: Comprehensive docs with tutorials, API reference, and community guides

API Features: CLI, SDK, REST API, Terraform provider
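For the REST API listed above, a minimal standard-library Python sketch follows. It assumes the v2 base URL `https://api.vultr.com/v2`, bearer-token authentication, and a `GET /instances` endpoint whose JSON response carries an `instances` array; the `VULTR_API_KEY` environment variable name is also an assumption. Pagination and error handling are omitted, so treat it as a starting point and confirm paths and field names against Vultr's API reference.

```python
# Sketch: list instances through the Vultr REST API using only the standard library.
# Assumptions: v2 base URL, bearer-token auth, and an "instances" key in the
# GET /instances response; pagination and error handling are omitted.
import json
import os
import urllib.request
from typing import Optional

API_BASE = "https://api.vultr.com/v2"


def build_request(path: str, api_key: Optional[str] = None) -> urllib.request.Request:
    """Build a GET request for `path`, attaching a bearer token when one is given."""
    headers = {"Accept": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(API_BASE + path, headers=headers, method="GET")


def list_instances(api_key: str) -> list:
    """Fetch the first page of instances for the account behind `api_key`."""
    with urllib.request.urlopen(build_request("/instances", api_key), timeout=30) as resp:
        return json.load(resp).get("instances", [])


if __name__ == "__main__":
    key = os.environ.get("VULTR_API_KEY")  # hypothetical variable name for this sketch
    if key:
        for inst in list_instances(key):
            print(inst.get("id"), inst.get("region"), inst.get("plan"), inst.get("status"))
```

Separating request construction from the network call keeps the authentication logic testable without credentials or network access.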

Security & Compliance

Security: Regular security assessments; compliance with key regulations such as GDPR and HIPAA

Compliance: GDPR, HIPAA

Founded 2014, with 10+ years of cloud infrastructure experience

30+ global data center locations

Publicly documented 99.99% uptime SLA

SOC 2 compliant

GDPR compliant

PCI DSS compliant

Data Center Locations

Coverage

Countries: United States, Germany, Netherlands, United Kingdom, France, Sweden, Poland, Spain, Japan, South Korea, Singapore, Australia, India, Brazil, Canada

Cities: New Jersey, Chicago, Dallas, Los Angeles, Seattle, Miami, Atlanta, Silicon Valley, Toronto, São Paulo, Amsterdam, Frankfurt, London, Paris, Stockholm, Warsaw, Madrid, Tokyo, Osaka, Seoul, Singapore, Sydney, Mumbai, Tel Aviv

Multi-Region Failover: Yes (manual)

Latency Tiers: Standard cloud latency

North America · Europe · Asia-Pacific · South America · Africa

Compliance Regions

EU Data Residency: Yes (Frankfurt, Amsterdam, Paris, Stockholm, Warsaw, Madrid; London is in the UK, outside the EU)

US Gov Cloud: No

India Region: Yes (Mumbai)

Key Strengths

AMD MI300X and MI325X availability; among the first cloud providers to offer the MI325X

Competitive per-hour pricing with no long-term commitment required

Global footprint across 30+ data center locations

Simple self-service onboarding with no sales process

Broad API and CLI support for infrastructure-as-code workflows

Known Limitations

GPU availability can be limited and subject to stock constraints

No spot/preemptible GPU pricing

Less mature AI/ML platform tooling compared to hyperscalers

No built-in model marketplace or managed ML pipelines

Limited enterprise-grade SLA customization for GPU workloads

Additional Information

Support Options

24/7 support through customer service portals and community resources

Community

Active community forums, Discord server, and GitHub presence; community contributions to the documentation are accepted

Core Proposition

Globally distributed cloud infrastructure with straightforward pricing and broad GPU availability, including high-end AMD Instinct accelerators, for AI/ML workloads.

Payment Methods

Credit Card · PayPal · Wire Transfer · Crypto (Bitcoin)

Last updated March 2026. Information subject to change.