NVIDIA · August 2023

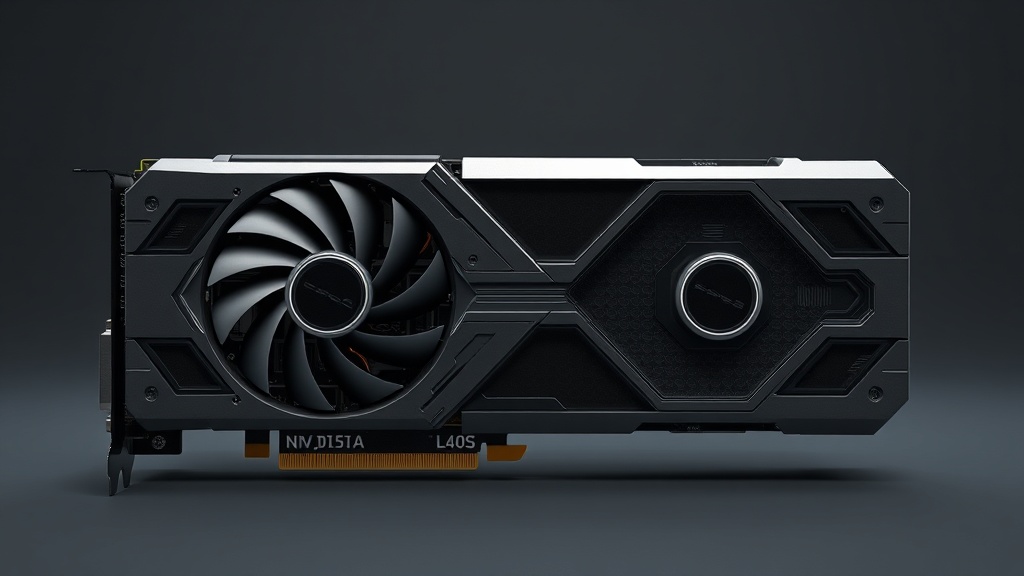

L40S

The NVIDIA L40S is a datacenter GPU built for AI workloads, graphics rendering, and virtualization. Based on the Ada Lovelace architecture, it combines 48 GB of GDDR6 memory with fourth-generation Tensor Cores and third-generation RT Cores, and it improves performance and efficiency over the previous generation. The L40S targets enterprise deployments that need solid support for both AI and graphics-intensive work.

Benchmarks & Throughput

Structured Sparsity

Supported (2:4 structured sparsity, up to 2x peak Tensor Core throughput vs. dense)

Transformer Throughput

Supported (Transformer Engine with FP8 precision)
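Structured sparsity on NVIDIA Tensor Cores uses a 2:4 pattern: in every group of four consecutive weights, at most two are nonzero, and the hardware can skip the zeros for up to twice the dense math throughput. The minimal sketch below shows only the pruning pattern itself, using magnitude-based selection as an assumed pruning criterion. It does not call any NVIDIA library, and the function name `prune_2_4` is hypothetical.

```python
def prune_2_4(row):
    """Apply a 2:4 structured-sparsity pattern to one row of weights.

    In each consecutive group of 4 values, keep the 2 with the largest
    magnitude and set the other 2 to zero (magnitude pruning is an assumed
    criterion for illustration).
    """
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    pruned = []
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # Indices of the two largest-magnitude entries in this group
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(v if j in keep else 0.0 for j, v in enumerate(group))
    return pruned


weights = [0.1, -0.9, 0.3, 0.05, 1.0, 0.2, -0.4, 0.0]
print(prune_2_4(weights))
# [0.0, -0.9, 0.3, 0.0, 1.0, 0.0, -0.4, 0.0]
```

In practice the pruned model is usually fine-tuned afterwards to recover accuracy; the speedup comes from sparse Tensor Core kernels, not from this Python code.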

Workload Readiness

LLM Training

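With 48 GB of memory per GPU, a single L40S can fully train only relatively small models. Mixed-precision training with Adam needs roughly 16 bytes per parameter for weights, gradients, and optimizer states before activations are counted, so full training of a 70B-parameter model exceeds even an 8-GPU node (384 GB total) unless it relies on offloading or parameter-efficient methods. Models of that size and larger, including 400B+ models, require multi-node configurations.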
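A back-of-the-envelope calculation makes the memory limits for full training concrete. The sketch below assumes the common rule of thumb of about 16 bytes per parameter for mixed-precision Adam (16-bit weights and gradients, FP32 master weights, two FP32 optimizer moments), ignores activations and framework overhead, and assumes training state can be sharded perfectly across GPUs. The function names are hypothetical; only the 48 GB per-GPU capacity comes from the L40S specification.

```python
import math

L40S_MEMORY_GB = 48  # memory per L40S GPU

# Assumed rule of thumb for mixed-precision Adam, in bytes per parameter:
# 2 (16-bit weights) + 2 (16-bit gradients) + 4 (FP32 master weights)
# + 8 (two FP32 Adam moments) = 16. Activations are NOT included.
BYTES_PER_PARAM = 2 + 2 + 4 + 8


def training_state_gb(params_billion):
    """Memory for weights, gradients, and optimizer states, in GB."""
    return params_billion * BYTES_PER_PARAM  # 1e9 params * bytes / 1e9 bytes per GB


def min_gpus_for_training(params_billion, per_gpu_gb=L40S_MEMORY_GB):
    """Lower bound on GPU count, assuming perfect sharding and no activations."""
    return math.ceil(training_state_gb(params_billion) / per_gpu_gb)


for size in (7, 13, 70):
    print(f"{size}B: {training_state_gb(size)} GB of state, "
          f">= {min_gpus_for_training(size)} L40S GPUs")
# 7B: 112 GB of state, >= 3 L40S GPUs
# 13B: 208 GB of state, >= 5 L40S GPUs
# 70B: 1120 GB of state, >= 24 L40S GPUs
```

Activations, temporary buffers, and communication overhead come on top of these figures, so real jobs need more GPUs than this lower bound, or techniques such as activation checkpointing, CPU offloading, or parameter-efficient fine-tuning.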

LLM Inference

Well suited to inference, with strong tokens-per-second performance from its fourth-generation Tensor Cores and enough VRAM to hold both model weights and a sizable KV cache.
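How much VRAM the KV cache consumes follows from a simple formula: each layer stores one key vector and one value vector per KV head for every token in every sequence. The sketch below assumes FP16 cache entries (2 bytes) and a Llama-2-7B-like shape (32 layers, 32 KV heads, head dimension 128) purely as an illustrative configuration; substitute the real values for the model being served.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, batch_size,
                   bytes_per_elem=2):
    """Size of the KV cache in bytes.

    The leading factor of 2 counts one key tensor and one value tensor per layer.
    bytes_per_elem=2 assumes FP16/BF16 cache entries.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)


GIB = 2 ** 30
# Assumed Llama-2-7B-like configuration (illustrative only)
per_token = kv_cache_bytes(32, 32, 128, seq_len=1, batch_size=1)
full = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=8)
print(per_token / 2 ** 20, "MiB per token")    # 0.5 MiB per token
print(full / GIB, "GiB for 8 x 4096 tokens")   # 16.0 GiB for 8 x 4096 tokens
```

Models that use grouped-query attention have fewer KV heads than attention heads, which shrinks the cache proportionally; lower-precision cache formats shrink it further.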

Vision Training

Well suited to vision training; its large VRAM supports big batch sizes and complex models.

Diffusion Models

Well suited to diffusion models, with high Tensor Core throughput that speeds up both training and inference.

Multimodal AI

Handles multimodal AI workloads efficiently; its memory capacity and bandwidth accommodate models that combine several input types, such as images and text.

Reinforcement Learning

Effective for reinforcement learning, with enough compute for fast policy updates; overall throughput also depends on how quickly the environment can be simulated, which often runs on the CPU.

HPC / Simulation

FP64 throughput is limited, as is typical of the Ada Lovelace architecture, which makes the L40S a poor fit for double-precision HPC simulations; it remains viable for single- and mixed-precision workloads.

Scientific Computing

Suitable for scientific computing tasks that can leverage mixed-precision calculations, though not optimal for those requiring extensive double-precision computations.
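One standard way to reach double-precision accuracy while doing the heavy arithmetic in lower precision is mixed-precision iterative refinement: solve a linear system in FP32, then correct the answer using residuals computed in FP64. The NumPy sketch below illustrates the technique on the CPU; it is not L40S-specific code, and a GPU version would run the FP32 solves through a GPU linear-algebra library. For simplicity it calls `numpy.linalg.solve` on every iteration, whereas a production version would factor the matrix once and reuse the factors.

```python
import numpy as np


def refine_solve(A, b, iterations=5):
    """Solve A x = b with FP32 solves and FP64 residual corrections."""
    A64 = np.asarray(A, dtype=np.float64)
    b64 = np.asarray(b, dtype=np.float64)
    A32 = A64.astype(np.float32)

    # Initial solution computed entirely in single precision
    x = np.linalg.solve(A32, b64.astype(np.float32)).astype(np.float64)
    for _ in range(iterations):
        r = b64 - A64 @ x                                 # residual in FP64
        d = np.linalg.solve(A32, r.astype(np.float32))   # correction in FP32
        x += d.astype(np.float64)                         # update in FP64
    return x


rng = np.random.default_rng(0)
A = rng.standard_normal((50, 50)) + 50 * np.eye(50)  # well-conditioned test matrix
x_true = rng.standard_normal(50)
b = A @ x_true

x_fp32 = np.linalg.solve(A.astype(np.float32), b.astype(np.float32))
x_refined = refine_solve(A, b)
print("FP32 error:   ", np.max(np.abs(x_fp32 - x_true)))
print("refined error:", np.max(np.abs(x_refined - x_true)))
```

Refinement converges when the matrix is reasonably well conditioned relative to FP32 precision; badly conditioned systems still need FP64 throughout, which is where limited FP64 throughput becomes a real constraint.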

Edge Inference

Not suited to edge inference: its 350 W TDP and full-height, dual-slot form factor are designed for datacenter servers.

Real-Time Serving

Well suited to real-time AI serving: Tensor Core throughput supports high request volumes, and per-token latency in LLM decoding is bounded mainly by memory bandwidth.
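Memory bandwidth bounds per-token latency because, when decoding one sequence at a time, generating each token requires reading every model weight from memory once; bandwidth divided by model size therefore gives an upper bound on tokens per second. The sketch assumes 864 GB/s, NVIDIA's published L40S memory bandwidth (verify against the current datasheet), and a hypothetical 7B-parameter model. It ignores KV-cache reads, compute time, and kernel overheads, so real numbers will be lower.

```python
L40S_BANDWIDTH_BYTES_PER_S = 864e9  # assumed: NVIDIA's published L40S memory bandwidth


def max_decode_tokens_per_s(num_params, bytes_per_param,
                            bandwidth=L40S_BANDWIDTH_BYTES_PER_S):
    """Upper bound on single-sequence decode speed for a memory-bound model.

    Each generated token streams all weights from memory once; KV-cache
    traffic, compute, and overheads are ignored.
    """
    weight_bytes = num_params * bytes_per_param
    return bandwidth / weight_bytes


for label, bpp in (("FP16", 2), ("FP8", 1)):
    tps = max_decode_tokens_per_s(7e9, bpp)  # hypothetical 7B-parameter model
    print(f"7B {label}: <= {tps:.1f} tokens/s, >= {1000 / tps:.1f} ms/token")
# 7B FP16: <= 61.7 tokens/s, >= 16.2 ms/token
# 7B FP8: <= 123.4 tokens/s, >= 8.1 ms/token
```

Batching many requests raises aggregate throughput without changing this per-sequence bound much, because the same weight reads serve every sequence in the batch.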

Fine-Tuning

Full fine-tuning fits on a single GPU only for smaller models, because weights, gradients, and optimizer states must all reside in VRAM; larger models need several GPUs with sharded training state.

LoRA Efficiency

Efficient for LoRA fine-tuning: training only small low-rank adapter matrices sharply reduces gradient and optimizer memory, which extends fine-tuning to larger models than full fine-tuning allows.
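LoRA's memory savings come from its parameter count: instead of updating a frozen weight matrix W of shape d_out × d_in, LoRA trains two low-rank factors B (d_out × r) and A (r × d_in) whose product is added to W. The sketch below counts trainable parameters for a hypothetical model with 32 layers, each adapting four 4096 × 4096 attention projections at rank 8; these dimensions are illustrative assumptions, not figures from any specific model.

```python
def full_params(d_out, d_in):
    """Parameters updated when fine-tuning a d_out x d_in weight matrix fully."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters in LoRA factors B (d_out x rank) and A (rank x d_in)."""
    return d_out * rank + rank * d_in


# Hypothetical configuration: 32 layers x 4 attention projections (q, k, v, o)
num_matrices = 32 * 4
d, r = 4096, 8
full = num_matrices * full_params(d, d)
lora = num_matrices * lora_params(d, d, r)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
# full: 2,147,483,648  LoRA: 8,388,608  ratio: 0.39%
```

Only these small factors need gradients and optimizer states, which is where most of the saving comes from; the frozen base weights still have to fit in VRAM, or be quantized as in QLoRA.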

Market Authority

Cloud Adoption

NVIDIA confirms L40S available on Google Cloud and Oracle Cloud Infrastructure

Research Citations

Limited; a handful of preprints and technical reports reference L40S, but not widespread in peer-reviewed literature

Community Benchmarks

Some independent benchmarks published by Lambda Labs and select cloud providers

GitHub Support

Supported by major deep learning frameworks (PyTorch, TensorFlow) through standard CUDA compatibility; few L40S-specific optimizations are widespread

Enterprise Cases

NVIDIA and partners (e.g., Dell, Supermicro) have published solution briefs and customer references highlighting L40S in enterprise AI and visualization workloads

Key Strengths

The L40S performs well in AI training and inference and is also effective for graphics rendering and virtualization, making it a versatile choice for datacenters that run mixed workloads.

Limitations

The L40S can cost more than other GPUs in its class, and high demand may limit availability. It has no NVLink, so multi-GPU communication runs over PCIe, and its passively cooled 350 W design requires servers with adequate power delivery and airflow.

Expert Insight

The L40S is a notable step forward for AI compute in standard PCIe servers. When comparing cloud providers, look beyond the hourly rate: interconnect bandwidth (PCIe within a node, since the L40S lacks NVLink, and InfiniBand or Ethernet between nodes) and regional availability can significantly affect total cost of ownership for large-scale training.
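Looking beyond the hourly rate can be made concrete with a small calculation. The sketch assumes a job whose work is fixed in single-GPU hours and a per-provider scaling efficiency (the fraction of ideal speedup achieved as GPUs are added), which is where interconnect bandwidth shows up. All prices and efficiencies below are hypothetical placeholders, not quotes from any provider.

```python
def job_cost(rate_per_gpu_hour, single_gpu_hours, num_gpus, scaling_efficiency):
    """Return (wall-clock hours, total cost) for a job spread over num_gpus.

    scaling_efficiency is the fraction of linear speedup achieved (0 < e <= 1).
    """
    wall_hours = single_gpu_hours / (num_gpus * scaling_efficiency)
    return wall_hours, rate_per_gpu_hour * num_gpus * wall_hours


# Hypothetical providers: B is cheaper per hour but scales worse
for name, rate, eff in (("A", 1.00, 0.90), ("B", 0.85, 0.70)):
    hours, cost = job_cost(rate, single_gpu_hours=100, num_gpus=8,
                           scaling_efficiency=eff)
    print(f"Provider {name}: {hours:.1f} h wall clock, ${cost:.2f}")
# Provider A: 13.9 h wall clock, $111.11
# Provider B: 17.9 h wall clock, $121.43
```

Because total cost reduces to rate × single-GPU hours ÷ efficiency, a provider with a lower hourly rate is cheaper overall only if its rate-to-efficiency ratio is also lower.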